Henry Dietz

http://aggregate.org/hankd/

Department of Electrical and Computer Engineering

Center for Visualization & Virtual Environments

University of Kentucky, Lexington, KY 40506-0046

Original March 22, 2020, Latest Update March 22, 2020

This document should be cited using something like the following BibTeX entry:

@techreport{paint20200322,

author={Henry Dietz},

title={{Senscape Painting Algorithms}},

month={March},

day={22},

year={2020},

institution={University of Kentucky},

howpublished={Aggregate.Org online technical report},

URL={http://aggregate.org/DIT/PAINT/}

}

The primary publication describing the senscape painting algorithms is "Senscape: modeling and presentation of uncertainty in fused sensor data live image streams," by Henry Dietz and Paul Eberhart, presented at IS&T Electronic Imaging 2020. A preprint version is posted here. The slides for this paper are also posted here.

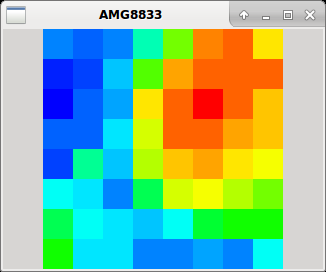

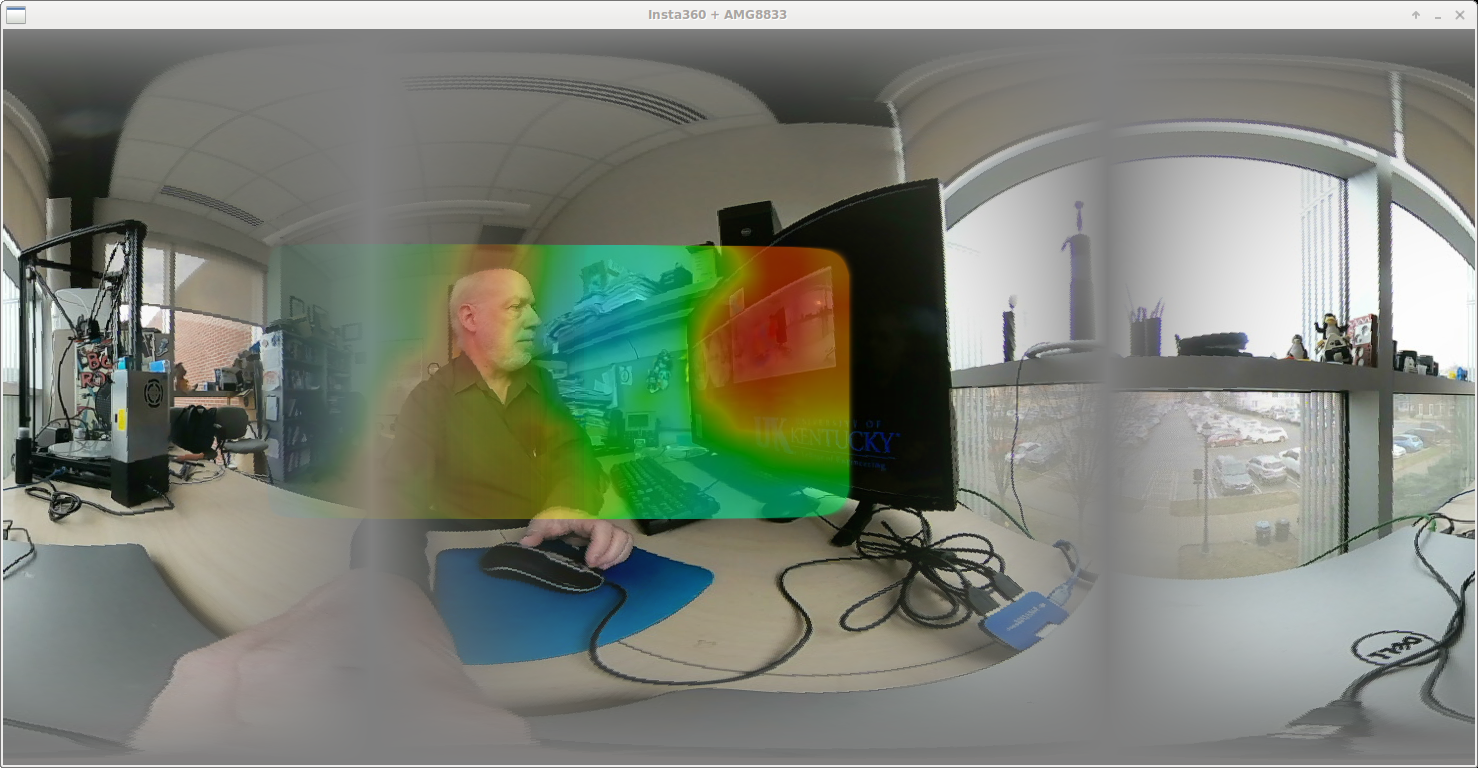

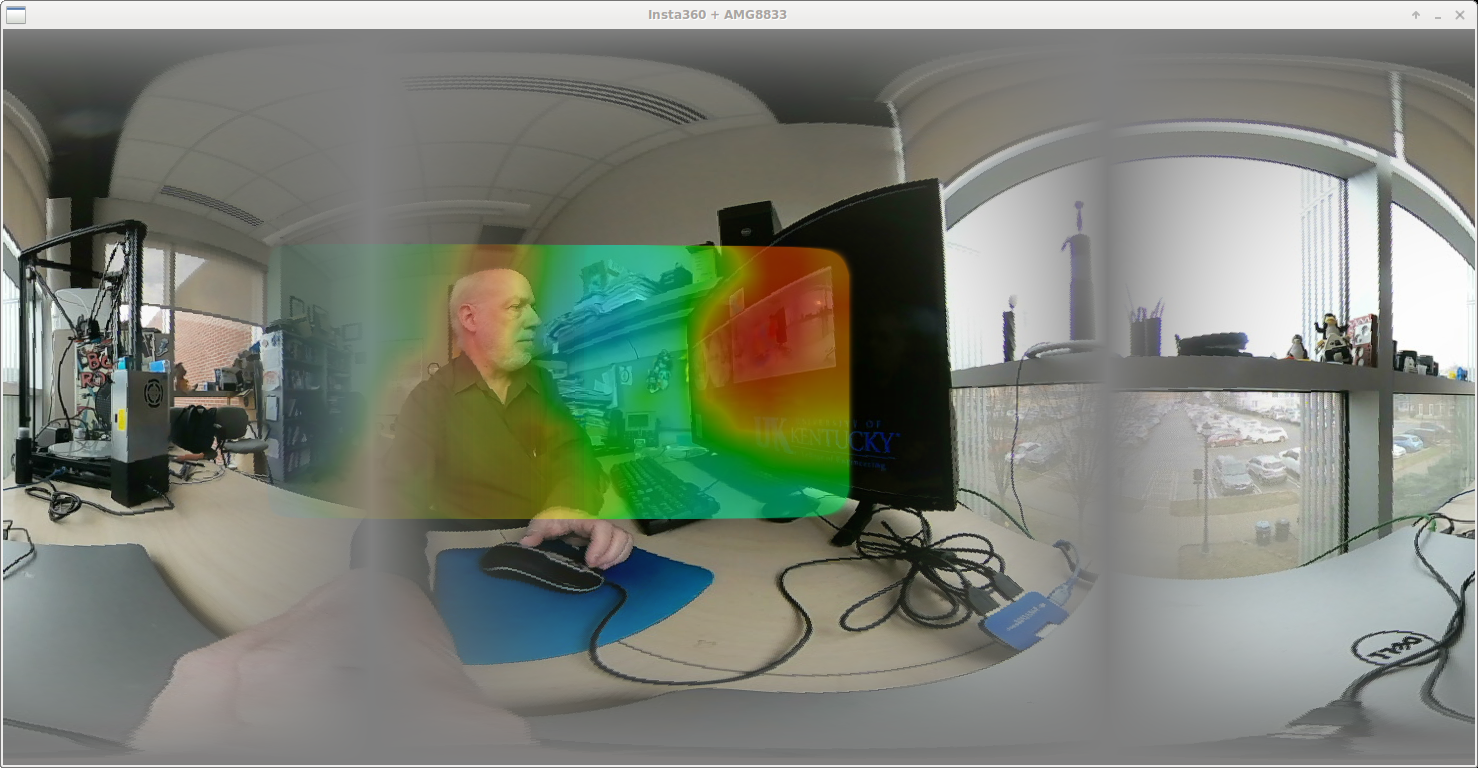

There are two major Senscape examples presented in the EI2020 paper: KVIRP and Wakam. The three images at the top of this paper are from KVIRP. The software for each of these prototype devices is rather crude and virtually undocumented, but does implement the key ideas. We hope to post improved versions, but at least these are usable as is.

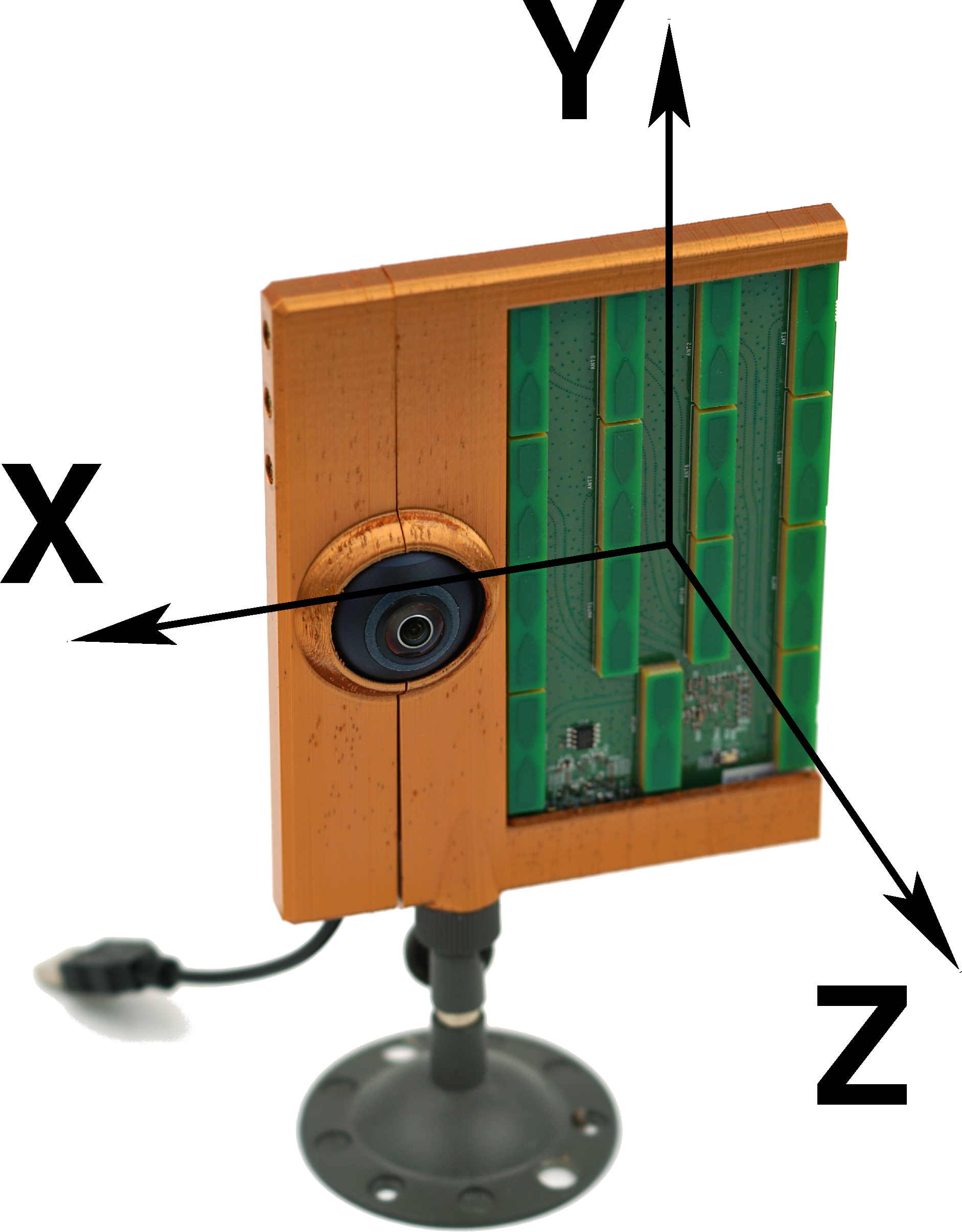

KVIRP, pronounced kay-verp, stands for Kentucky's Visual / Infra Red Painter. Details on how to build the physical unit are given in the above paper, but the code to run it is not.

Although the current code does uncalibrated stitching of the two 180-degree views and aligns one image with the next very crudely (without using any of the fancier alignment algorithms in OpenCV), it is highly functional. The current software version, 20200625, is kvirp.cpp, which assumes that the Arduino interfacing with the AMG8833 is running amg8833.ino. The original release version, kvirp20200127.cpp, is now obsolete because it uses a different AMG8833 interface protocol. This C++ program requires OpenCV (primarily to manage video decoding and window creation), and can be compiled by:

g++ -I/usr/include/opencv -I/usr/include/opencv2 -L/usr/lib/ -g -o kvirp kvirp.cpp -lopencv_core -lopencv_imgproc -lopencv_highgui -lopencv_ml -lopencv_video -lopencv_features2d -lopencv_calib3d -lopencv_objdetect -lopencv_stitching -lopencv_imgcodecs -lopencv_videoio

When run, kvirp expects stdin to be the serial feed from the thermal sensor via USB, so a minimal command line might be something like:

./kvirp </dev/ttyACM0
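To make the stdin convention concrete, here is a minimal sketch of pulling one thermal frame from an input stream. The AMG8833 is an 8x8 thermal array, but the frame format below (64 whitespace-separated temperatures, row-major) is purely an assumption for illustration; it is not the protocol spoken by amg8833.ino, and the names `ThermalFrame`, `readThermalFrame`, `cellAt`, and `hottestCell` are hypothetical.

```cpp
#include <array>
#include <cassert>
#include <cstddef>
#include <istream>
#include <sstream>

// ASSUMED frame format (not the real amg8833.ino protocol):
// 64 whitespace-separated temperatures in degrees Celsius, row-major.
constexpr std::size_t kGridSide = 8;  // the AMG8833 is an 8x8 thermal array
using ThermalFrame = std::array<float, kGridSide * kGridSide>;

// Read one frame from `in`. Returns false on end of input or malformed
// data; in that case `frame` may be partially overwritten.
bool readThermalFrame(std::istream& in, ThermalFrame& frame) {
    for (float& cell : frame) {
        if (!(in >> cell)) return false;
    }
    return true;
}

// Temperature at (row, col), with bounds checked in debug builds.
float cellAt(const ThermalFrame& frame, std::size_t row, std::size_t col) {
    assert(row < kGridSide && col < kGridSide);
    return frame[row * kGridSide + col];
}

// Index of the hottest cell, e.g. to decide where to paint most strongly.
std::size_t hottestCell(const ThermalFrame& frame) {
    std::size_t best = 0;
    for (std::size_t i = 1; i < frame.size(); ++i) {
        if (frame[i] > frame[best]) best = i;
    }
    return best;
}
```

With the redirection shown above, a loop such as `while (readThermalFrame(std::cin, frame)) { ... }` would consume frames as the sensor produces them, which is why no device path needs to be passed as an argument.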

The command line options are:

Be aware that defaults for window size, placement, etc. depend heavily on your particular installation of OpenCV. In some cases, the windows created will initially overlay each other, so if you only see one window, drag it a bit to see what's behind it.

Wakam, pronounced wah-cam, stands for WAlabot (creator) Kentucky cAMera. In many ways, it is very similar to KVIRP -- which makes sense given that it uses an Insta360 Air in much the same way. The catch is that it is fusing target-tracking radar data rather than a thermal image. The radar data comes from a Walabot Creator, which is a pretty interesting device, but has a very crude SDK using raw USB access (you will not see a /dev entry for the Walabot).

Because the Walabot SDK is rather crude and low-level, and the device itself does not behave very consistently, Wakam's performance is nowhere near as impressive as that of KVIRP. It would probably be appropriate to aggressively apply some sort of "diffusion" to spread the depth data from the Walabot for painting, but the current version, from January 28, 2020, simply paints discs with size in proportion to signal strength. However, Wakam does serve as a working proof of concept.
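The disc painting described above can be sketched as follows. This is not the code in wakam.cpp: it uses a plain byte buffer instead of an OpenCV image so that it stands alone, and the `GrayImage` and `Target` types, the `paintTarget` function, and the `maxRadius` tuning parameter are all hypothetical. It also assumes signal strength has already been normalized to the range 0 to 1.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <vector>

// Hypothetical single-channel image; the real program uses OpenCV images.
struct GrayImage {
    int width;
    int height;
    std::vector<std::uint8_t> pixels;  // row-major, width * height entries
    GrayImage(int w, int h)
        : width(w), height(h), pixels(static_cast<std::size_t>(w) * h, 0) {}
};

// Hypothetical radar target: image position plus strength ASSUMED in [0, 1].
struct Target {
    int x;
    int y;
    double strength;
};

// Paint a filled disc whose radius is proportional to signal strength.
// maxRadius (an assumed tuning parameter) is the radius at strength 1.0.
// Pixels falling outside the image are skipped.
void paintTarget(GrayImage& img, const Target& t, int maxRadius,
                 std::uint8_t value) {
    assert(img.pixels.size() ==
           static_cast<std::size_t>(img.width) * img.height);
    const int r = static_cast<int>(t.strength * maxRadius + 0.5);
    for (int dy = -r; dy <= r; ++dy) {
        for (int dx = -r; dx <= r; ++dx) {
            if (dx * dx + dy * dy > r * r) continue;  // outside the disc
            const int x = t.x + dx;
            const int y = t.y + dy;
            if (x < 0 || y < 0 || x >= img.width || y >= img.height) continue;
            img.pixels[static_cast<std::size_t>(y) * img.width + x] = value;
        }
    }
}
```

In an OpenCV program the inner loops would normally be replaced by a single `cv::circle` call with a negative thickness, which draws a filled disc. A diffusion step of the kind suggested above would then blur or spread these painted values rather than leaving hard-edged discs.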

Instructions for building the hardware appear in the EI2020 paper cited above. The software for Wakam consists of wakam.cpp, a relatively simple C++ program that requires both OpenCV and the development library for the Walabot Creator. It can be compiled by:

g++ -I/usr/include/opencv -I/usr/include/opencv2 -L/usr/lib/ -L/usr/lib/walabot -g -o wakam wakam.cpp -D__LINUX__ -lWalabotAPI -Wl,-rpath,/usr/lib/walabot -lopencv_core -lopencv_imgproc -lopencv_highgui -lopencv_ml -lopencv_video -lopencv_features2d -lopencv_calib3d -lopencv_objdetect -lopencv_contrib -lopencv_legacy -lopencv_stitching -lopencv_imgcodecs -lopencv_videoio

The command line and options for wakam are essentially identical to those of kvirp, except the Walabot SDK locates the raw USB device for the Walabot Creator, so stdin is not used.